For a long time, making a good video meant accepting a lot of friction. You needed an idea, then a script, then visuals, then audio, then editing, and often several different tools just to get one short piece finished. That is why an AI Video Generator Agent feels meaningful in a more practical way. It is not just about turning text into motion. It is about giving creators one place to move from rough concept to something closer to a finished video without losing momentum at every step.

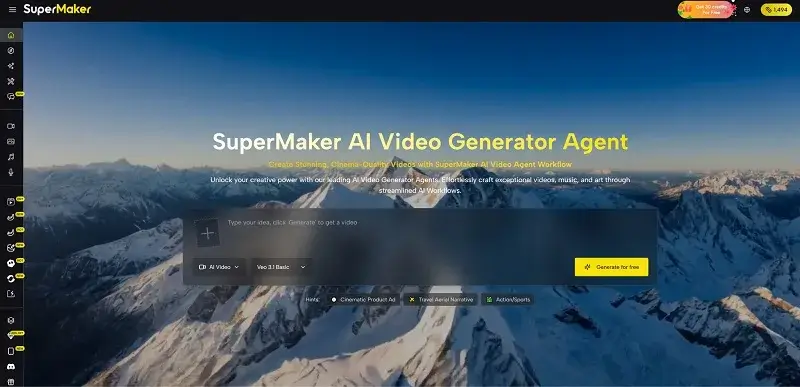

What makes this more interesting today is that expectations around AI video have changed. People are no longer impressed by motion alone. They want cleaner workflows, better visual quality, more reliable image-to-video results, and access to the strongest models without having to learn five different systems. In my experience, that is where SuperMaker becomes easier to understand. It presents itself less like a novelty tool and more like a platform built around serious creative production.

What Makes The Platform Feel More Capable

A lot of AI video products still feel like separate demos stitched together. One tool handles text-to-video. Another handles image-to-video. Another gives you audio. Another is better for image generation. That can work, but it also creates fatigue. SuperMaker takes a different route by putting these parts into one environment.

Video Creation Sits At The Center

The platform is built around AI video creation as the main experience. That includes both text-to-video and image-to-video, which matters because many projects do not begin from text alone. Sometimes the starting point is a reference image, a product photo, a character design, or a storyboard frame that needs motion.

Image Tools Strengthen The Workflow

This is where the platform starts to feel more complete. Instead of forcing users to leave and build assets elsewhere, it also provides access to high-end image models. That matters because image quality often determines whether image-to-video results feel convincing in the first place.

Audio Makes The Output More Usable

Voice and music are also part of the system. That may sound like a secondary detail, but in practice, it is often the difference between a rough test clip and something that can actually be published or presented. A silent generated scene may look good, yet still feel unfinished.

Why Top Models Change The Experience

One reason SuperMaker stands out is that it puts some of the most talked-about models in one place. For users, that changes the platform from being just another interface into something closer to a creative hub.

Seedance 2.0 Pushes Image-To-Video Forward

Seedance 2.0 is one of the biggest reasons the platform feels current. It is presented as a leading video model with strong motion quality, multi-shot storytelling ability, and better audio-visual synchronization. For people who care about image-to-video quality, that matters a lot. In my testing of platforms built around this kind of model, the difference is often visible in motion continuity, camera feel, and the way scenes hold together instead of breaking into disconnected fragments.

Nano Banana Pro Expands Visual Starting Points

Nano Banana Pro adds another layer of value because it gives creators access to a very strong image model before the video even begins. If image-to-video depends on the quality of the source image, then strong image generation is not a side feature. It is part of the video workflow. That is why having Nano Banana Pro on the same platform makes practical sense.

Veo 3.1 Raises The Ceiling For Video Quality

Veo 3.1 is another important piece. SuperMaker presents it with native 1080p quality, audio integration, and first-last frame effects. For creators, this means the platform does not rely on a single model. It gives users access to multiple top-tier engines with different strengths, which is often more useful than being locked into one generation style.

How The Official Workflow Actually Works

The platform keeps its core process simple, and that is part of the appeal. Instead of overcomplicating the experience, it describes the workflow in a straightforward sequence.

Step One Begins With A Prompt

Everything starts with a prompt. The user enters a concept, scene idea, or story direction. This is the creative brief for the system. It does not need to be written like code. It reads more like plain-language direction given to a collaborator.

Step Two Generates The Visual Output

The next step is generation. This is where the chosen model or workflow turns the prompt or source material into a video. Depending on the project, that may involve text-to-video or image-to-video.

Step Three Adds Music And Voice

After the visuals are created, the platform moves into enhancement. This stage brings in audio layers like voice and background music, which makes the project feel much closer to a finished media asset.

Step Four Refines And Publishes

The last step is publishing. This is where users can make final adjustments and export the content. It is a small detail, but it reflects a realistic understanding that generation is not the end of the process.
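For readers who think in code, the four steps above can be sketched as a small pipeline. This is only an illustration of the sequence, not SuperMaker's API: the `Project` class and every function name here are hypothetical, and nothing in the sketch calls a real service. It simply shows how a prompt, an optional source image, audio layers, and a publish step fit together in order.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Project:
    """Hypothetical container for one video project (not a SuperMaker type)."""
    prompt: str
    source_image: Optional[str] = None  # path to a reference image, if any
    mode: Optional[str] = None          # "text-to-video" or "image-to-video"
    audio_layers: list = field(default_factory=list)
    published: bool = False
    history: list = field(default_factory=list)


def write_prompt(text, source_image=None):
    # Step one: the prompt acts as the creative brief.
    if not text.strip():
        raise ValueError("prompt must not be empty")
    project = Project(prompt=text.strip(), source_image=source_image)
    project.history.append("prompt")
    return project


def generate_visuals(project):
    # Step two: choose the generation mode from the available inputs.
    project.mode = "image-to-video" if project.source_image else "text-to-video"
    project.history.append("generate")
    return project


def add_audio(project, voice=True, music=True):
    # Step three: attach the requested audio layers.
    if voice:
        project.audio_layers.append("voice")
    if music:
        project.audio_layers.append("music")
    project.history.append("audio")
    return project


def publish(project):
    # Step four: mark the project as finalized and exported.
    project.published = True
    project.history.append("publish")
    return project


def run_workflow(text, source_image=None):
    # Run the four steps in the order the article describes.
    project = write_prompt(text, source_image)
    for step in (generate_visuals, add_audio, publish):
        project = step(project)
    return project
```

Calling `run_workflow("Slow orbit around the product", source_image="product.png")` would follow the image-to-video path, while omitting `source_image` falls back to text-to-video. In practice each step may be repeated several times before the next one begins.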

How Different Models Serve Different Needs

One of the easier ways to understand the platform is to see how different model options support different kinds of work.

| Model Or Capability | Best Understood As | Practical Strength |
| --- | --- | --- |
| Seedance 2.0 | Advanced video model | Strong motion, multi-shot scenes, polished image-to-video feel |
| Nano Banana Pro | High-end image model | Strong source images for visual ideation and animation workflows |
| Veo 3.1 | Premium video model | 1080p output, audio support, stronger cinematic polish |
| Voice tools | Audio layer | Adds narration and presentation value |
| Music tools | Atmosphere layer | Helps clips feel complete rather than silent drafts |

Who This Platform Is Most Useful For

This kind of setup is especially useful for people who want strong results without building a complicated stack on their own.

Solo Creators Gain More Range

A solo creator can move from idea to publishable short video much more easily when images, motion, voice, and music are all in one place. That does not make the work effortless, but it does remove a lot of switching and setup.

Marketers Can Move Faster

For marketers, the value lies in speed and flexibility. A product concept can begin as an image, evolve into a video, and then be completed with audio. The same quick turnaround makes it easier to bring user-generated content into campaigns: authentic visuals, videos, and feedback from real users, all of which help build trust. As a result, the path from concept testing to campaign-ready content becomes much shorter.

Small Teams Benefit From Fewer Handoffs

Small teams often do not have the time to coordinate multiple production tools. A platform like this lowers that coordination burden. That can matter even more than raw model quality in day-to-day work.

Experimentation Becomes Easier To Sustain

When strong models like Seedance 2.0, Nano Banana Pro, and Veo 3.1 are already available inside the same environment, experimentation feels less expensive in terms of time and attention. Users can compare directions without rebuilding their workflow each time.

Why A Balanced View Still Helps

Even with top models, no platform removes the need for creative judgment.

Good Prompts Still Matter

Better prompts usually produce better results. A strong model can help, but it still needs clear direction if the output is going to feel specific rather than generic.

Some Results Need Multiple Tries

Some results still take several attempts, even with leading models. In my view, the advantage here is not that every generation becomes perfect on the first attempt, but that the platform makes iteration easier and less frustrating.

The Best Value Is Workflow Confidence

What SuperMaker really offers is not just access to powerful models. It offers a more confident way to use them. That may be the real reason platforms like this are becoming more attractive. Creators do not only want the best engine. They want a place where the best engines fit naturally into the way they already work.

Why This Direction Feels Important Now

The broader trend is clear. AI creation is moving from isolated tool demos toward connected creative systems. SuperMaker fits that shift well because it combines video, image, music, and voice inside one environment while also giving users access to some of the strongest current models, including Seedance 2.0, Nano Banana Pro, and Veo 3.1.

That combination makes the platform easier to understand in real-world terms. It is not only promising faster generation. It is trying to make modern AI production feel less fragmented, more approachable, and much more usable for people who want to create consistently rather than just test a single clip once.